ReadRight

An AI-powered literacy assessment platform that evaluates oral reading fluency with millisecond precision.

Goal

Develop a high-accuracy, real-time reading assessment tool to replace manual fluency testing.

My Role

Full Stack Developer & AI Engineer

Timeline & Tools

March 2024 – Present

FastAPI, Whisper ASR, BFA, WebSockets, React

01. Overview

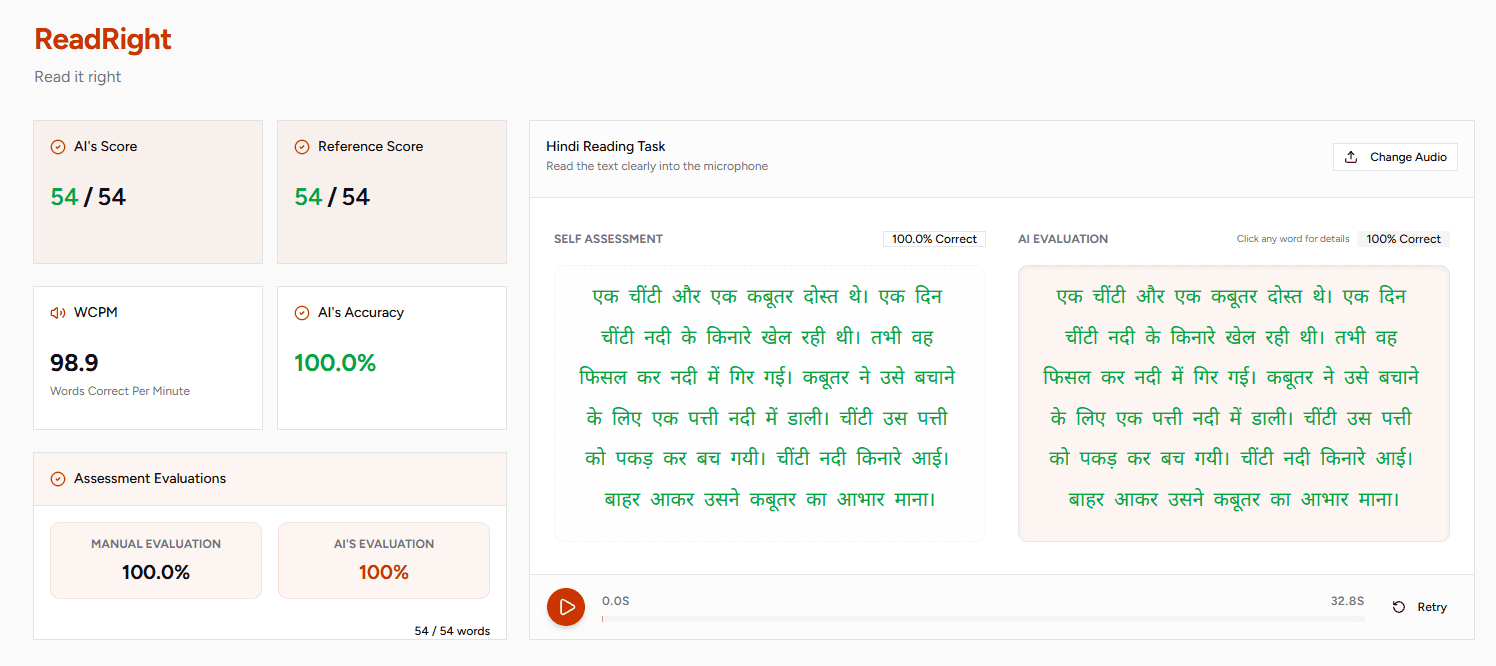

ReadRight is a specialized EdTech platform designed to automate Oral Reading Fluency (ORF) assessments. By combining Whisper speech recognition with forced alignment, it gives educators and students granular, word-by-word feedback on reading accuracy, speed, and pronunciation.

Unlike standard ASR (Automatic Speech Recognition), which often struggles with the stuttering or "broken" reading typical of early learners, ReadRight uses a hybrid approach (ASR transcription, forced alignment, and phonetic plus fuzzy word matching) to stay accurate even when the reading is disfluent.

Key Features

- Real-Time Evaluation: Live feedback via WebSockets as the student reads.

- Phonetic Alignment: Millisecond-precise timestamps for every phoneme spoken.

- Hybrid Scoring: Uses Metaphone phonetic matching and Levenshtein distance to account for regional accents and minor mispronunciations.

- AI Tutoring: An integrated assistant that can reason over reading performance and provide targeted practice.

02. The Problem

Manual reading assessment is one of the biggest time-sinks for primary school teachers. A typical assessment involves a teacher sitting with a student, timing them for 60 seconds, and manually marking skipped or mispronounced words on a paper sheet.

This process is:

- Subjective: Different teachers may grade the same reading differently.

- Inconsistent: External noise or teacher fatigue can lead to errors.

- Delayed: Feedback is often given days later, losing its pedagogical impact.

- Opaque: It’s hard to track progress over time without extensive manual data entry.

03. The Architecture

ReadRight is built as a distributed system so that heavy audio processing does not block the user interface.

Frontend (React + Vite)

Handles audio recording (MediaRecorder API), visualizes real-time feedback, and renders phoneme-level timing charts.

Backend (FastAPI + Python)

The "Brain" of the operation. It manages task orchestration, database persistence, and WebSocket sessions.

Evaluation Engine

A suite of tools including:

- Whisper: For initial transcription.

- BFA (Bournemouth Forced Aligner): For precise phonetic alignment.

- Phonemizer: For converting text to IPA (International Phonetic Alphabet).
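The source doesn't show how the backend keeps heavy audio work from stalling live sessions. One common pattern, sketched here under assumptions, is to push each blocking model call onto a worker thread with `asyncio.to_thread`, so FastAPI's event loop stays free to serve WebSocket traffic. `transcribe_chunk` is a hypothetical stand-in for a real Whisper or BFA call, not ReadRight's actual code.

```python
import asyncio
import time


def transcribe_chunk(chunk: bytes) -> str:
    """Hypothetical stand-in for a blocking Whisper or BFA call."""
    time.sleep(0.1)  # simulate slow, blocking model inference
    return f"<{len(chunk)} bytes>"


async def handle_chunk(chunk: bytes) -> str:
    # asyncio.to_thread (Python 3.9+) runs the blocking call in the default
    # thread pool, so the event loop can keep serving other WebSocket sessions.
    return await asyncio.to_thread(transcribe_chunk, chunk)


async def main() -> list[str]:
    # Three chunks are processed concurrently instead of one after another.
    chunks = [b"x" * n for n in (10, 20, 30)]
    return await asyncio.gather(*(handle_chunk(c) for c in chunks))


if __name__ == "__main__":
    print(asyncio.run(main()))
```

With three 0.1 s calls, total wall time is roughly 0.1 s rather than 0.3 s. Threads help here because native extensions such as PyTorch typically release the GIL during heavy computation; for pure-Python CPU-bound work, a process pool (`concurrent.futures.ProcessPoolExecutor` via `loop.run_in_executor`) is the safer choice.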

04. Real-Time WebSocket Evaluation

The most technically challenging part of ReadRight was the real-time feedback loop. We implemented a stateful WebSocket protocol that streams audio chunks from the browser to the server.

The server maintains a sliding window over the transcript, matching the words transcribed from incoming audio against the expected "target text" using a combination of fuzzy matching and phonetic encoding.
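One possible reading of the sliding window, as a minimal sketch: keep a cursor on the next unread target word, look a few words ahead for a match to each newly transcribed word, mark anything jumped over as skipped, and ignore spoken words with no match in the window (repeats, stutters, fillers). The function name, the `window` size, and the pluggable `match` callback are illustrative assumptions; in ReadRight the matching step would be the fuzzy and phonetic logic rather than the exact comparison used here by default. The output uses the same fields as the `details` array of the WebSocket snapshot example.

```python
from typing import Callable, List, Optional

# A matcher returns the method name ("exact", "phonetic", ...) or None.
Matcher = Callable[[str, str], Optional[str]]


def exact_match(target: str, spoken: str) -> Optional[str]:
    return "exact" if target == spoken else None


def align_with_window(
    target: List[str],
    spoken: List[str],
    window: int = 3,
    match: Matcher = exact_match,
) -> List[dict]:
    details: List[dict] = []
    cursor = 0  # index of the next target word not yet accounted for
    for word in spoken:
        hit, method = None, None
        # Only look a few words ahead so one stray word cannot jump far ahead.
        for k in range(cursor, min(cursor + window, len(target))):
            method = match(target[k], word)
            if method is not None:
                hit = k
                break
        if hit is None:
            continue  # no match in the window: treat as an insertion
        # Target words between the cursor and the match were skipped.
        for k in range(cursor, hit):
            details.append({"target": target[k], "spoken": None,
                            "correct": False, "method": "skipped"})
        details.append({"target": target[hit], "spoken": word,
                        "correct": True, "method": method})
        cursor = hit + 1
    # Everything after the cursor has not been read yet.
    for k in range(cursor, len(target)):
        details.append({"target": target[k], "spoken": None,
                        "correct": False, "method": "pending"})
    return details


# A stuttered "ए" before "एक" is ignored as an insertion.
print(align_with_window(["एक", "चींटी"], ["ए", "एक"]))
```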

Example WebSocket snapshot:

```json
{
  "type": "snapshot",
  "transcript": "एक",
  "accuracy": 100.0,
  "wcpm": 40.0,
  "details": [
    {"target": "एक", "spoken": "एक", "correct": true, "method": "exact"},
    {"target": "चींटी", "spoken": null, "correct": false, "method": "pending"}
  ]
}
```
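The `accuracy` and `wcpm` fields can be derived from `details` and the elapsed reading time. The sketch below assumes accuracy is measured over the words reached so far and WCPM is correct words × 60 / elapsed seconds; neither formula is confirmed by the source, and `build_snapshot` is a hypothetical helper. Under that assumption, the example's 40.0 WCPM after one correct word corresponds to 1.5 seconds of reading.

```python
import json


def build_snapshot(transcript: str, details: list[dict], elapsed_seconds: float) -> dict:
    # Words still marked "pending" have not been reached yet, so they are
    # excluded from accuracy; skipped or mismatched words count as errors.
    attempted = [d for d in details if d["method"] != "pending"]
    correct = sum(1 for d in attempted if d["correct"])
    accuracy = 100.0 * correct / len(attempted) if attempted else 0.0
    # Words correct per minute: correct words scaled to a 60-second window.
    wcpm = correct * 60.0 / elapsed_seconds if elapsed_seconds > 0 else 0.0
    return {
        "type": "snapshot",
        "transcript": transcript,
        "accuracy": round(accuracy, 1),
        "wcpm": round(wcpm, 1),
        "details": details,
    }


details = [
    {"target": "एक", "spoken": "एक", "correct": True, "method": "exact"},
    {"target": "चींटी", "spoken": None, "correct": False, "method": "pending"},
]
snapshot = build_snapshot("एक", details, elapsed_seconds=1.5)
print(json.dumps(snapshot, ensure_ascii=False))
```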

05. Phonetic Alignment & BFA

To provide millisecond-precise feedback, we integrated the Bournemouth Forced Aligner (BFA). When a student finishes a reading, the system does more than report that word X was wrong: by aligning the audio to the phonemes of the target word, it shows exactly where within the word the student struggled.

This allows us to detect subtle errors, such as a student missing the nasalization in the Hindi word "चींटी" (Chīṇṭī, "ant"), which a standard ASR system might ignore.
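A forced aligner returns time-stamped segments for each target phoneme, which makes per-phoneme checks straightforward. The sketch below assumes the alignment has been converted into a hypothetical `PhonemeSegment` record carrying a confidence `score`; BFA's actual output format is not described here, and the thresholds and segment values are invented for illustration. It flags phonemes that are either low-confidence or implausibly short, such as a nasalized vowel that was barely produced.

```python
from dataclasses import dataclass


@dataclass
class PhonemeSegment:
    phoneme: str   # IPA symbol of the target phoneme
    start_ms: int  # aligned start time
    end_ms: int    # aligned end time
    score: float   # hypothetical per-phoneme confidence in [0, 1]


def flag_weak_phonemes(segments, min_score=0.5, min_duration_ms=30):
    """Return (phoneme, start_ms, end_ms) for segments that look poorly realized."""
    issues = []
    for seg in segments:
        duration = seg.end_ms - seg.start_ms
        if seg.score < min_score or duration < min_duration_ms:
            issues.append((seg.phoneme, seg.start_ms, seg.end_ms))
    return issues


# Illustrative (made-up) alignment of "चींटी" /tʃĩːʈiː/ in which the
# nasalized vowel is barely present.
segments = [
    PhonemeSegment("tʃ", 0, 80, 0.92),
    PhonemeSegment("ĩː", 80, 95, 0.31),
    PhonemeSegment("ʈ", 95, 170, 0.88),
    PhonemeSegment("iː", 170, 300, 0.90),
]
print(flag_weak_phonemes(segments))
```

Because each flagged phoneme keeps its start and end times, the frontend can highlight the exact slice of the word (here 80 to 95 ms) on its timing chart.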

06. Technical Highlights

Smart Evaluation Logic

We developed a method priority system for word matching:

- Exact: Perfect string match.

- Phonetic: Match via Metaphone (e.g., "एक" vs "इक").

- Approximate: Match within a Levenshtein distance threshold.

- Mismatch/Skipped: Automated detection of skipped lines or incorrect words.
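The priority order above can be expressed as a cascade that returns the first method that succeeds. This is a sketch with stated substitutions: Metaphone is built around English spelling and the source does not say how it is applied to Devanagari, so a hypothetical `toy_phonetic_key` (collapse independent vowels, drop dependent vowel signs) stands in for it, and the Levenshtein threshold of roughly one edit per three characters is an arbitrary illustrative choice.

```python
from typing import Callable, Optional

DEVANAGARI_VOWELS = set("अआइईउऊऋएऐओऔ")  # independent vowels
VOWEL_SIGNS = set("ािीुूृेैोौ")          # dependent vowel signs (matras)


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance over code points."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1,                # deletion
                            curr[j - 1] + 1,            # insertion
                            prev[j - 1] + (ca != cb)))  # substitution
        prev = curr
    return prev[-1]


def toy_phonetic_key(word: str) -> str:
    """Hypothetical stand-in for Metaphone on Devanagari text."""
    key = []
    for ch in word:
        if ch in DEVANAGARI_VOWELS:
            if not key or key[-1] != "V":
                key.append("V")  # any run of independent vowels becomes "V"
        elif ch not in VOWEL_SIGNS:
            key.append(ch)       # keep consonants and nasalization marks
    return "".join(key)


def match_word(target: str, spoken: Optional[str],
               phonetic_key: Callable[[str], str] = toy_phonetic_key,
               max_ratio: float = 0.34) -> dict:
    result = {"target": target, "spoken": spoken, "correct": False}
    if spoken is None:
        return {**result, "method": "skipped"}
    if spoken == target:
        return {**result, "correct": True, "method": "exact"}
    if phonetic_key(spoken) == phonetic_key(target):
        return {**result, "correct": True, "method": "phonetic"}
    threshold = max(1, int(len(target) * max_ratio))
    if levenshtein(spoken, target) <= threshold:
        return {**result, "correct": True, "method": "approximate"}
    return {**result, "method": "mismatch"}


print(match_word("एक", "इक"))
```

With this key, "एक" and "इक" both reduce to `Vक` and match phonetically. Because the threshold never drops below one edit, very short words are accepted as approximate matches after a single change, which a production version would likely want to tune.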

Multi-Channel Streaming

The AI Assistant uses Server-Sent Events (SSE) to stream its reasoning process. It first "thinks" about the reading data, retrieves relevant pedagogical strategies, and then streams the final feedback to the user.
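Server-Sent Events frame each message as an optional `event:` line and a `data:` line followed by a blank line, so separate "channels" for reasoning, retrieval, and the final answer can be expressed as distinct event names. A minimal sketch, assuming hypothetical event names (`thinking`, `retrieval`, `answer`, `done`) and a generator that a FastAPI route could return wrapped in `StreamingResponse(..., media_type="text/event-stream")`:

```python
import json
from typing import Iterable, Iterator


def format_sse(event: str, data: dict) -> str:
    # One SSE message: an "event:" line, a "data:" line, then a blank line.
    payload = json.dumps(data, ensure_ascii=False)
    return f"event: {event}\ndata: {payload}\n\n"


def tutor_stream(thoughts: Iterable[str], strategies: Iterable[str],
                 feedback: str) -> Iterator[str]:
    # 1. Stream intermediate reasoning on its own channel.
    for thought in thoughts:
        yield format_sse("thinking", {"text": thought})
    # 2. Report which pedagogical strategies were retrieved.
    for strategy in strategies:
        yield format_sse("retrieval", {"strategy": strategy})
    # 3. Send the final feedback, then signal completion.
    yield format_sse("answer", {"text": feedback})
    yield format_sse("done", {})


for frame in tutor_stream(["Accuracy is high; pace is slow."],
                          ["repeated reading"],
                          "Try reading the passage again a little faster."):
    print(frame, end="")
```

`json.dumps` escapes newlines inside strings, so each payload fits on a single `data:` line; on the browser side, `EventSource.addEventListener("thinking", ...)` would receive only that channel.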

07. Results & Reflection

ReadRight has successfully processed thousands of reading samples in testing. By automating the "boring" part of assessment, we allow teachers to focus on what they do best: teaching.

Main lessons:

- Latency is everything: In an educational tool, even a 1-second delay in feedback can break a child's concentration.

- Hybrid is better: Combining traditional NLP (Metaphone/Levenshtein) with modern LLMs yields the most robust results.

- Visuals matter: Showing the "Thinking" process of the AI builds trust with both teachers and students.

08. Tech Stack

Frontend: React 18, Vite, TailwindCSS, Radix UI, Lucide Icons, MediaRecorder API

Backend: Python 3.10+, FastAPI, WebSockets, SQLAlchemy

AI & ML: Whisper (ASR), BFA (Forced Alignment), LlamaIndex, Ollama, ChromaDB

Tooling: Docker, Makefile, Ruff